Mastering AI Agent Design Patterns: From Theory to Production

Introduction

The landscape of artificial intelligence is undergoing a massive paradigm shift. We are rapidly moving away from zero-shot, conversational LLM wrappers toward autonomous, multi-step agentic systems. As AI Engineers, we are no longer just tweaking prompts; we are designing complex, stateful architectures where language models act as reasoning engines orchestrating tools, memory, and parallel execution threads. But as with any evolving software engineering discipline, building reliable agents requires moving past ad-hoc scripts and embracing standardized architectures. This brings us to the critical concept of AI Agent Design Patterns.

In this guide, we will explore the foundational patterns required to build robust, scalable AI agents. We will draw heavily from the book Agentic Design Patterns and dive deep into the architectures that are defining the next generation of software. I will also share some of my own field-tested design patterns: concepts I’ve sketched out in my notes while building production-grade LLM systems.

Whether you are building coding assistants, automated researchers, or autonomous customer support agents, mastering these patterns is the key to moving your AI projects from fragile prototypes to highly reliable production systems.

Before we dive into the specific architectures, we need to talk about the book that is standardizing our field: Agentic Design Patterns.

For a long time, the AI engineering community relied on fragmented blog posts and Twitter threads to figure out how to build agents. This book changes that. Across its 11 chapters, it meticulously categorizes the design patterns that govern agentic behavior. It covers everything from basic Prompt Chaining (Chapter 1) to complex Goal Setting and Monitoring (Chapter 11) and the newly emerging Model Context Protocol (MCP).

What makes this book essential is that it treats AI agents as distributed software systems. It acknowledges that LLMs are stochastic and prone to hallucination, and it provides the architectural blueprints to mitigate these flaws using engineering rigor.

Let’s break down some of the core patterns detailed in the book and how you can implement them.

1. Core AI Agent Design Patterns

1.1. Prompt Chaining & Routing

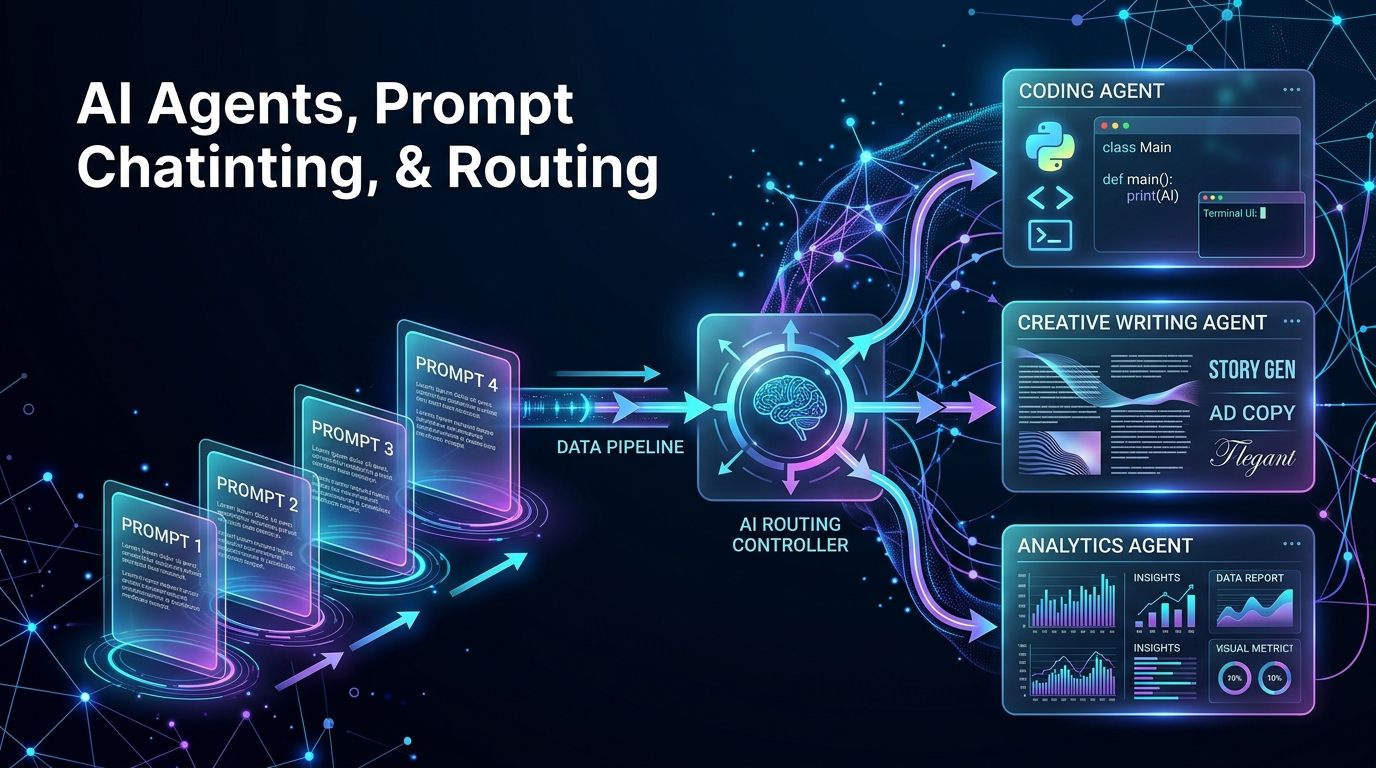

The most fundamental shift in agentic design is the realization that a single prompt is rarely sufficient for complex tasks. Prompt Chaining (Chapter 1) is the pattern of breaking down a massive cognitive load into smaller, sequential tasks. Instead of asking an LLM to “research a topic, write a report, and format it in markdown,” you chain three distinct prompts. The output of Prompt A becomes the input of Prompt B.
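The research, report, and format example can be sketched as three chained calls. This is a minimal illustration, assuming a hypothetical `llm` callable that takes a prompt string and returns text; `run_chain` and `fake_llm` are names invented here, not part of any library.

```python
from typing import Callable

# Hypothetical stand-in for a real model client: prompt in, text out.
LLM = Callable[[str], str]

def run_chain(llm: LLM, topic: str) -> str:
    """Chain three prompts; each step consumes the previous step's output."""
    notes = llm(f"Research the topic and list key facts: {topic}")
    report = llm(f"Write a short report from these notes:\n{notes}")
    return llm(f"Format this report as markdown:\n{report}")

def fake_llm(prompt: str) -> str:
    # Echoes the instruction line so the data flow is visible without a model.
    return f"<{prompt.splitlines()[0]}>"

print(run_chain(fake_llm, "agent design patterns"))
```

Because each step is a separate call, you can log, validate, or retry any single link without rerunning the whole chain.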

Routing (Chapter 2) complements this by acting as the traffic controller. A router pattern uses a fast, lightweight model to classify the user’s intent and direct the request to a specialized sub-agent. If a user asks a complex math question, the router sends it to a Python-equipped agent. If they ask for creative writing, it routes to an agent optimized for prose.
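A router can be as small as a classifier plus a dispatch table. The sketch below assumes a keyword-based `classify_intent` as a placeholder for the fast, lightweight model; the agent functions and route names are hypothetical.

```python
from typing import Callable, Dict

def classify_intent(message: str) -> str:
    """Placeholder classifier; in practice a small, fast model would do this."""
    text = message.lower()
    if any(tok in text for tok in ("solve", "calculate", "integral")):
        return "math"
    if any(tok in text for tok in ("poem", "story", "essay")):
        return "creative"
    return "general"

def math_agent(message: str) -> str:
    return f"[math agent] {message}"

def creative_agent(message: str) -> str:
    return f"[creative agent] {message}"

def general_agent(message: str) -> str:
    return f"[general agent] {message}"

ROUTES: Dict[str, Callable[[str], str]] = {
    "math": math_agent,
    "creative": creative_agent,
}

def route(message: str) -> str:
    # Unknown intents fall through to the general agent.
    handler = ROUTES.get(classify_intent(message), general_agent)
    return handler(message)

print(route("Calculate the integral of x^2"))
```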

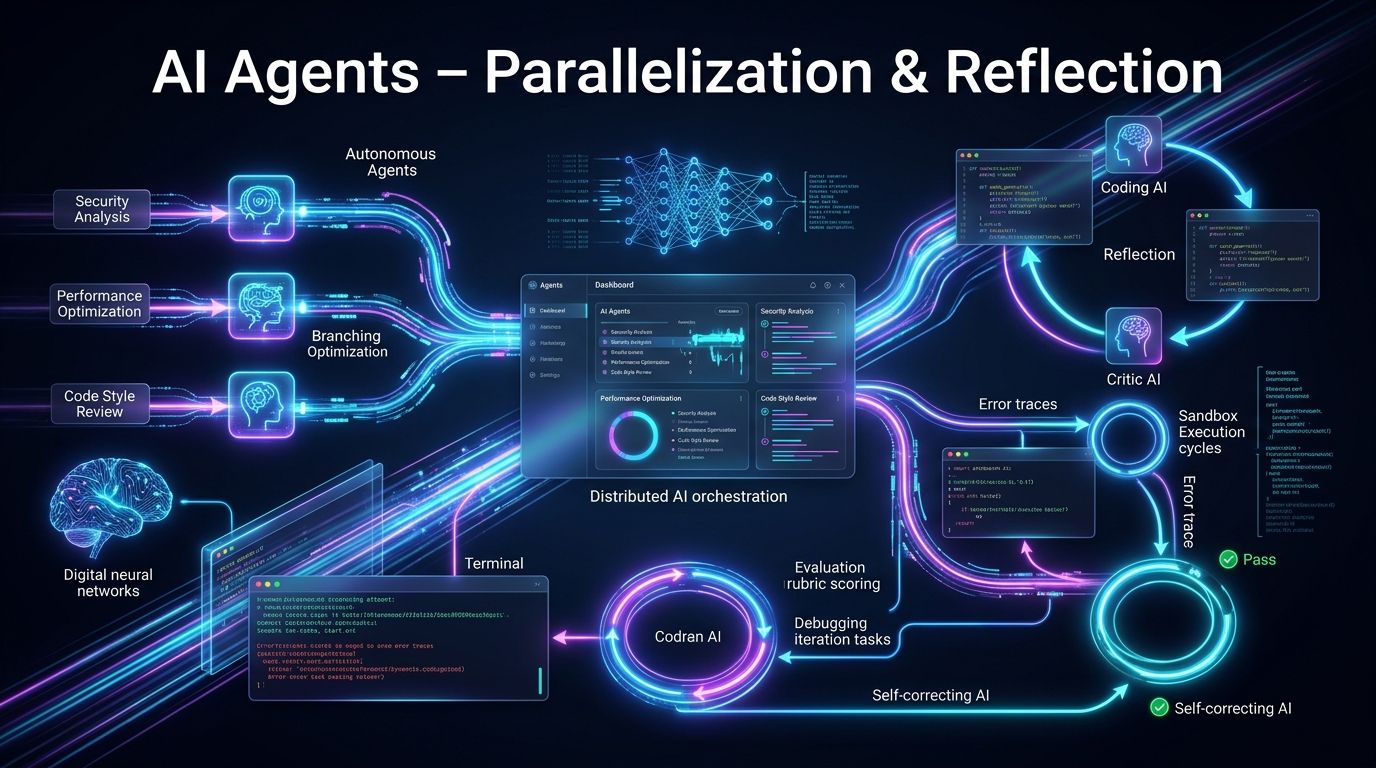

1.2. Parallelization & Reflection

Speed and accuracy are the twin pillars of good AI engineering. Parallelization (Chapter 3) involves fanning out tasks to multiple agents simultaneously. For example, if you are building an agent to review a pull request, you don’t need to evaluate security, performance, and style sequentially. You can dispatch three parallel agentic calls.
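The pull request example maps naturally onto `asyncio.gather`. This is a sketch under the assumption that each reviewer is an async function wrapping a model call; `review` here only simulates latency and returns a canned string.

```python
import asyncio

async def review(aspect: str, diff: str) -> str:
    """Hypothetical reviewer; a real one would await a model API call."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"{aspect}: no issues found in {len(diff)} chars"

async def review_pull_request(diff: str) -> dict[str, str]:
    aspects = ["security", "performance", "style"]
    # gather runs the three reviews concurrently and preserves input order.
    results = await asyncio.gather(*(review(a, diff) for a in aspects))
    return dict(zip(aspects, results))

if __name__ == "__main__":
    print(asyncio.run(review_pull_request("diff --git a/app.py b/app.py")))
```

Total latency is then roughly that of the slowest reviewer rather than the sum of all three.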

Reflection (Chapter 4) is arguably the most powerful pattern for increasing output quality. In this pattern, the agent generates a draft, and then a critic agent (or the same agent with a different system prompt) reviews the output against a specific rubric.

Consider a coding agent:

- Agent A generates Python code.

- The code is executed in a sandbox.

- If it throws an error, the error trace is fed back into Agent A (Reflection).

- The loop continues until the tests pass or a maximum iteration limit (i_max) is reached.
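The loop above can be sketched as follows. The `generate` callable is a hypothetical stand-in for Agent A, and `run_in_sandbox` uses plain `exec()` purely for illustration: it is not a real sandbox, and production code should run untrusted output in an isolated process or container.

```python
import traceback
from typing import Callable, Optional

def run_in_sandbox(code: str) -> Optional[str]:
    """Run code and return an error trace, or None on success.
    NOTE: exec() is NOT a sandbox; it only stands in for one here."""
    try:
        exec(code, {})
        return None
    except Exception:
        return traceback.format_exc()

def reflect_loop(
    generate: Callable[[str, Optional[str]], str],
    task: str,
    max_iterations: int = 3,
) -> Optional[str]:
    error: Optional[str] = None
    for _ in range(max_iterations):
        code = generate(task, error)  # error is None on the first attempt
        error = run_in_sandbox(code)
        if error is None:
            return code  # the code ran cleanly
    return None  # gave up after max_iterations

def fake_generate(task: str, error: Optional[str]) -> str:
    # The first draft has a bug; the fix appears once an error trace is fed back.
    return "assert 1 + 1 == 2" if error else "assert 1 + 1 == 3"

print(reflect_loop(fake_generate, "add two numbers"))
```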

1.3. Tool Use & Planning

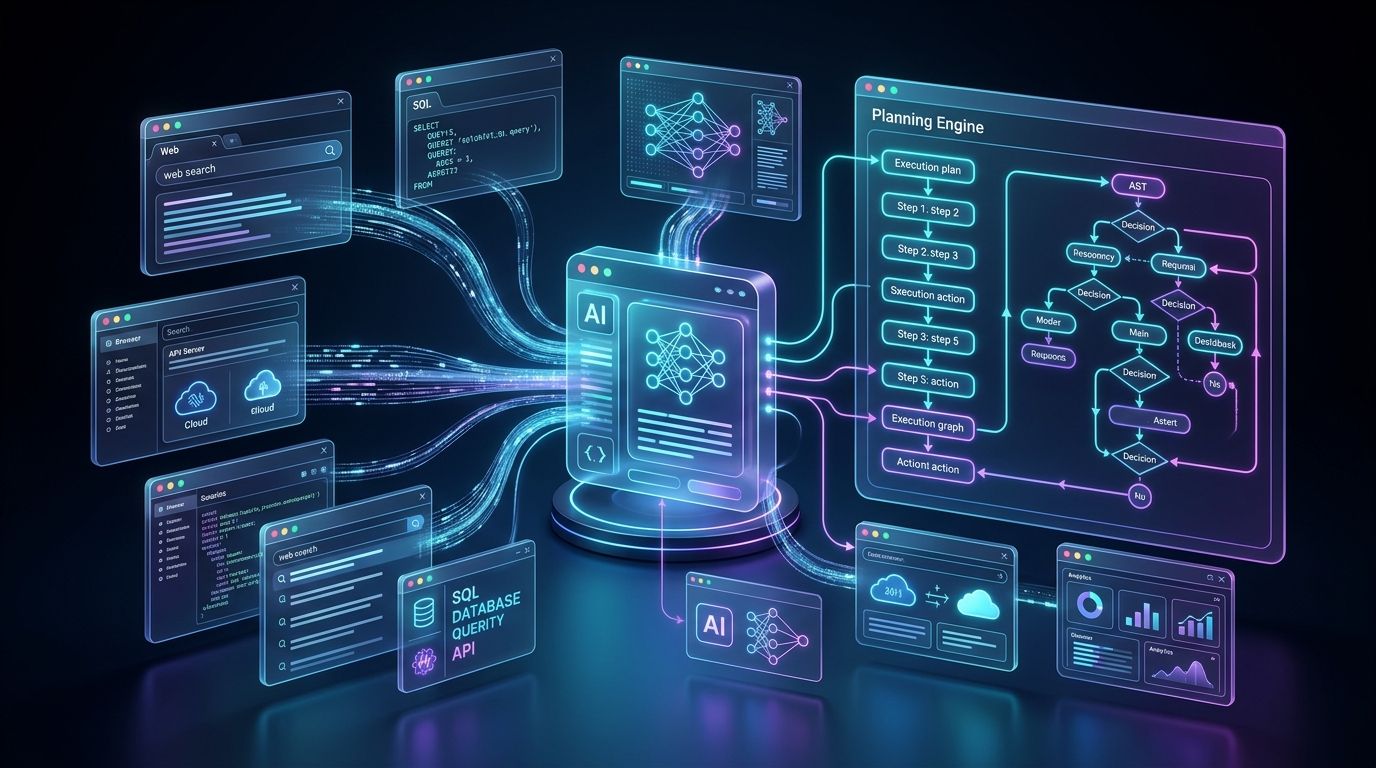

LLMs are inherently constrained by their training data cutoff. Tool Use (Chapter 5) gives them limbs. By providing the model with a schema of available functions (like web search, SQL execution, or API calls), the model can decide when to fetch external data.

However, chaotic tool use leads to infinite loops and wasted tokens. This is where Planning (Chapter 6) comes in. Before executing any tools, a planning agent writes out a step-by-step execution graph. Think of it as generating an Abstract Syntax Tree (AST) for real-world actions. The agent commits to a plan, executes step one, observes the result, and updates the plan.
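A minimal plan, execute, observe, update loop might look like the sketch below. The tool registry, the `fake_planner`, and the `max_steps` cap are all assumptions made for illustration; a real planner would ask a model to revise the remaining steps based on the observations so far.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]  # (tool name, argument)
Planner = Callable[[str, List[str]], List[Step]]

# Hypothetical tool registry; real tools would hit a search API or a database.
TOOLS: Dict[str, Callable[[str], str]] = {
    "web_search": lambda q: f"results for '{q}'",
    "sql_query": lambda q: f"rows for '{q}'",
}

def fake_planner(goal: str, observations: List[str]) -> List[Step]:
    """Placeholder planner: returns the steps still left to do."""
    full_plan = [("web_search", goal), ("sql_query", goal)]
    return full_plan[len(observations):]

def run_plan(goal: str, planner: Planner, max_steps: int = 10) -> List[str]:
    observations: List[str] = []
    plan = planner(goal, observations)
    while plan and len(observations) < max_steps:
        tool_name, arg = plan[0]                    # execute the next step
        observations.append(TOOLS[tool_name](arg))  # observe the result
        plan = planner(goal, observations)          # update the plan
    return observations

print(run_plan("agents", fake_planner))
```

The `max_steps` cap is what keeps a confused planner from looping forever and burning tokens.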

2. My Key Perspectives: Essential Patterns from the Field

While the book provides the standard library of agentic patterns, building these systems in production often requires bespoke architectural tweaks. Drawing from my own handwritten design notes and field experience, here are three crucial patterns that bridge the gap between theory and reality.

2.1. The “Contextual Funnel” Pattern

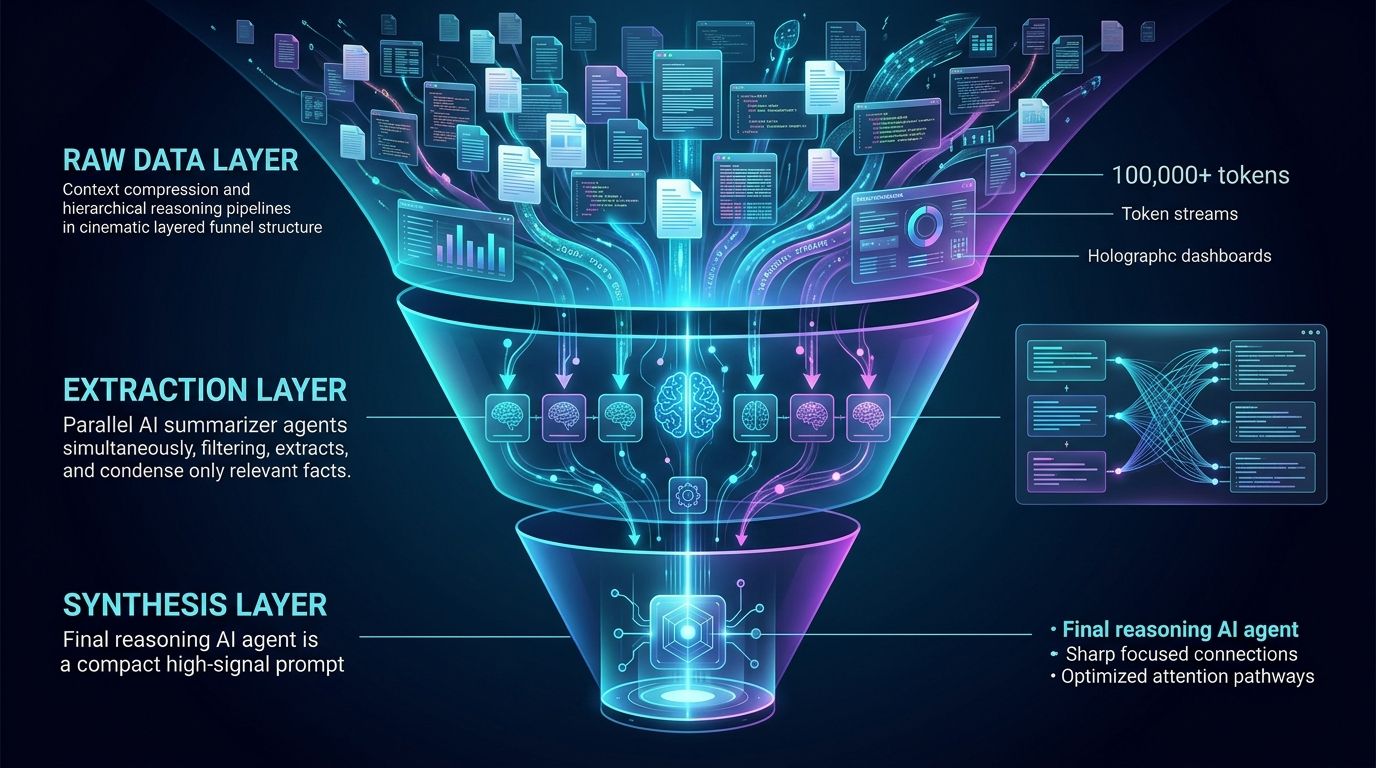

One of the biggest issues AI engineers face is context window bloat. When an agent is doing extensive research, it accumulates massive amounts of text, eventually degrading its reasoning capabilities (the “lost in the middle” phenomenon).

The Contextual Funnel pattern solves this. Instead of appending every observation to the main agent’s scratchpad, you use a summarizer agent at each step.

- Raw Data Layer: The agent scrapes 50 pages of documentation (e.g., 100,000 tokens).

- Extraction Layer: A map-reduce parallel process extracts only the facts relevant to the user’s query, reducing the context to 10,000 tokens.

- Synthesis Layer: The final reasoning agent operates on a dense, high-signal prompt of just 2,000 tokens.

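Because each layer has a fixed token budget, the input to the final reasoning agent is bounded no matter how much raw data was collected. A short, dense prompt makes it far less likely that a relevant fact gets buried in the middle of the context.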
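The three layers can be sketched with plain string processing. This is an illustration only: `count_tokens` treats one whitespace-separated word as one token, and `extract` uses keyword overlap where a real system would call a summarizer model. All function names are hypothetical.

```python
from typing import List

def count_tokens(text: str) -> int:
    """Crude proxy: one whitespace-separated word = one token (an assumption)."""
    return len(text.split())

def truncate(text: str, budget: int) -> str:
    return " ".join(text.split()[:budget])

def extract(page: str, query: str, budget: int) -> str:
    """Map step: keep only sentences that share a word with the query."""
    terms = set(query.lower().split())
    hits = [s.strip() for s in page.split(".") if terms & set(s.lower().split())]
    return truncate(". ".join(hits), budget)

def funnel(pages: List[str], query: str,
           per_page_budget: int = 200, final_budget: int = 2000) -> str:
    extracted = [extract(p, query, per_page_budget) for p in pages]  # extraction layer
    merged = " ".join(e for e in extracted if e)                     # reduce step
    return truncate(merged, final_budget)  # hard cap on what the reasoner sees

pages = ["Agents use tools. Cats sleep a lot.", "Bananas are yellow. Tools need schemas."]
print(funnel(pages, "tools"))
```

Whatever the size of the raw data, the synthesis prompt never exceeds `final_budget` tokens under this counting scheme.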

2.2. The “Fallback Cascade” Pattern

APIs fail. Models go down. Rate limits are exceeded. The Fallback Cascade pattern is an engineering necessity. When building a tool-calling agent, you must assume failures will happen and handle each one deterministically.

If an agent attempts to use a Search_Web tool and the search API times out, the agent should not just throw a 500 error to the user. The Fallback Cascade dictates that:

- The system catches the error.

- The system injects a system-level message into the agent’s context: "Tool execution failed due to network timeout. Please attempt to answer using your internal knowledge, or use the alternative tool: Search_Wikipedia."

- If the primary LLM (e.g., GPT) fails due to rate limits, the system automatically swaps the router configuration to a secondary model (e.g., Claude) with the exact same conversational state.
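A sketch of the cascade for tools is shown below. `search_web` and `search_wikipedia` are hypothetical stubs (the first always times out), and the message format appended to `context` is an assumption rather than any particular framework's schema. The same try-next-option loop applies when swapping a rate-limited model for a secondary one.

```python
from typing import Callable, Dict, List, Optional

def search_web(query: str) -> str:
    raise TimeoutError("network timeout")  # simulate the failing primary tool

def search_wikipedia(query: str) -> str:
    return f"wikipedia summary for '{query}'"

def call_with_fallback(
    query: str,
    tools: List[Callable[[str], str]],
    context: List[Dict[str, str]],
) -> Optional[str]:
    for tool in tools:
        try:
            return tool(query)
        except Exception as exc:
            # Tell the agent what happened instead of surfacing a 500 to the user.
            context.append({
                "role": "system",
                "content": f"Tool {tool.__name__} failed: {exc}. Trying the next option.",
            })
    context.append({
        "role": "system",
        "content": "All tools failed. Answer from internal knowledge.",
    })
    return None

context: List[Dict[str, str]] = []
print(call_with_fallback("agents", [search_web, search_wikipedia], context))
```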

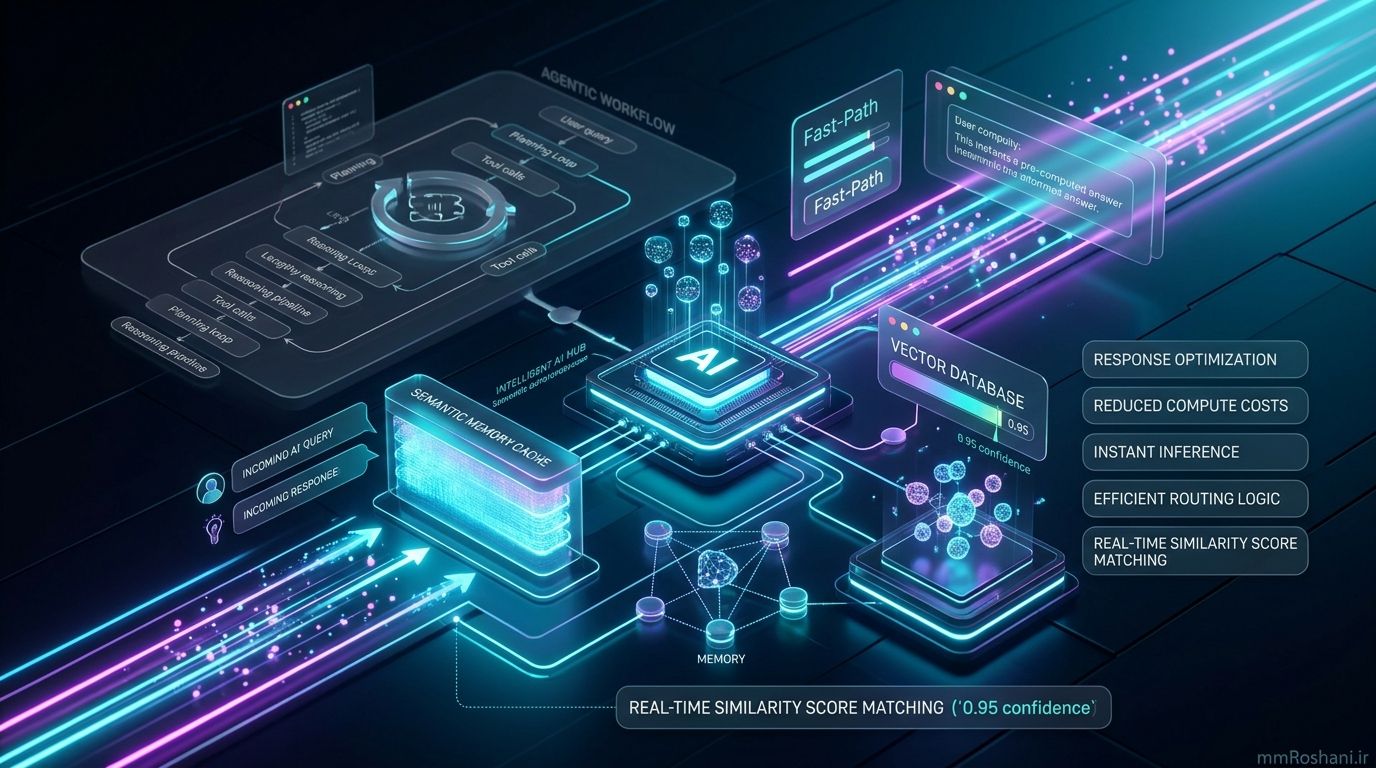

2.3. The “Semantic Caching & Fast-Path” Pattern

Running complex agentic loops is expensive and slow. If a user asks an autonomous research agent a question it has already solved for another user yesterday, it shouldn’t spin up a 5-minute planning and tool-calling loop.

Instead, the system embeds each incoming query and compares it against the embeddings of previously answered queries. If the similarity score exceeds a threshold (e.g., 0.95), the system routes the user to the “Fast-Path,” instantly returning the pre-computed agentic output. This hybrid design pattern drastically reduces compute costs while making the system feel much more responsive.
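The fast-path check can be sketched with cosine similarity over embeddings. The `embed` function below is a toy bag-of-words counter used only so the example runs without a model; a real system would call an embedding model and a vector store. `SemanticCache` and `answer` are hypothetical names.

```python
import math
from collections import Counter
from typing import Callable, List, Optional, Tuple

def embed(text: str) -> Counter:
    """Toy bag-of-words embedding; a real system would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.95) -> None:
        self.threshold = threshold
        self.entries: List[Tuple[Counter, str]] = []

    def lookup(self, query: str) -> Optional[str]:
        q = embed(query)
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None

    def store(self, query: str, result: str) -> None:
        self.entries.append((embed(query), result))

def answer(query: str, cache: SemanticCache, slow_agent: Callable[[str], str]) -> str:
    cached = cache.lookup(query)
    if cached is not None:
        return cached              # fast path: skip the agentic loop
    result = slow_agent(query)     # slow path: full planning and tool calls
    cache.store(query, result)
    return result
```

The threshold is a trade-off: set it too low and users get answers to questions they did not ask; set it too high and the cache rarely hits.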

3. Advanced Architecture: Multi-Agent Systems & Memory

As you scale your AI engineering efforts, you will inevitably move past single-agent architectures into ecosystems of interacting agents.

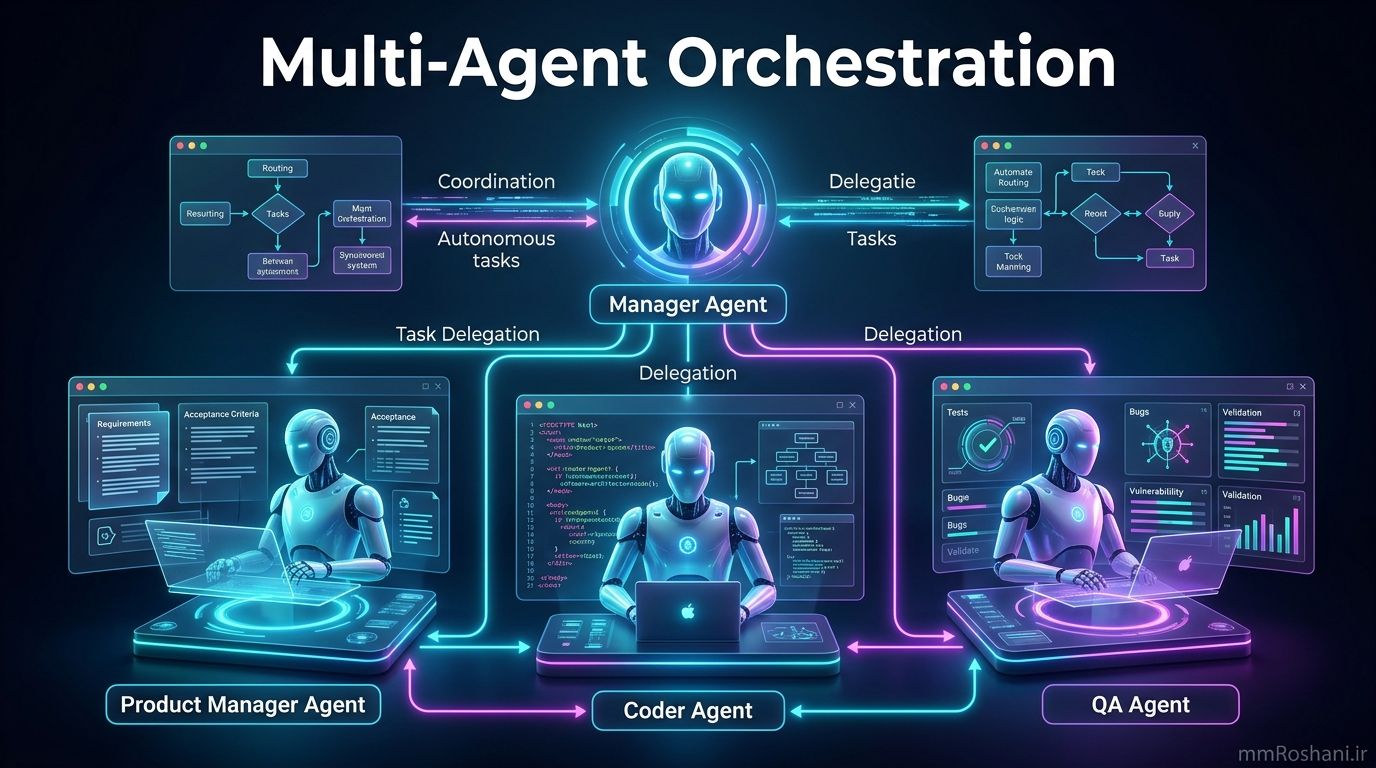

3.1. Multi-Agent Orchestration

Building a Multi-Agent System (MAS) is similar to structuring a corporate hierarchy (Chapter 7). You have a “Manager” agent responsible for user interaction and goal setting. The Manager delegates tasks to “Specialist” agents.

For example, a Software Development MAS might include:

- The Product Manager Agent: Writes requirements and acceptance criteria.

- The Coder Agent: Writes the implementation.

- The QA Agent: Writes and executes tests.

This separation of concerns ensures that each agent has a focused system prompt. A model tasked only with finding security vulnerabilities will perform significantly better than a model tasked with writing the code and checking its own work.
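The hierarchy can be sketched as a manager that passes work through a list of specialists, each with its own system prompt. The `Specialist` and `Manager` classes are hypothetical, and the `run` callables are stubs standing in for model calls; a real manager would likely decide the order dynamically rather than run a fixed pipeline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Specialist:
    name: str
    system_prompt: str          # keeps each agent focused on one concern
    run: Callable[[str], str]   # hypothetical: wraps a model call in practice

class Manager:
    def __init__(self, specialists: List[Specialist]) -> None:
        self.specialists = specialists

    def handle(self, request: str) -> Dict[str, str]:
        """Delegate in order; each specialist builds on the previous artifact."""
        artifacts: Dict[str, str] = {}
        work = request
        for specialist in self.specialists:
            work = specialist.run(work)
            artifacts[specialist.name] = work
        return artifacts

team = [
    Specialist("product_manager", "Write requirements and acceptance criteria.",
               lambda task: f"spec({task})"),
    Specialist("coder", "Write the implementation.", lambda spec: f"code({spec})"),
    Specialist("qa", "Write and execute tests.", lambda code: f"tests({code})"),
]
print(Manager(team).handle("login page"))
```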

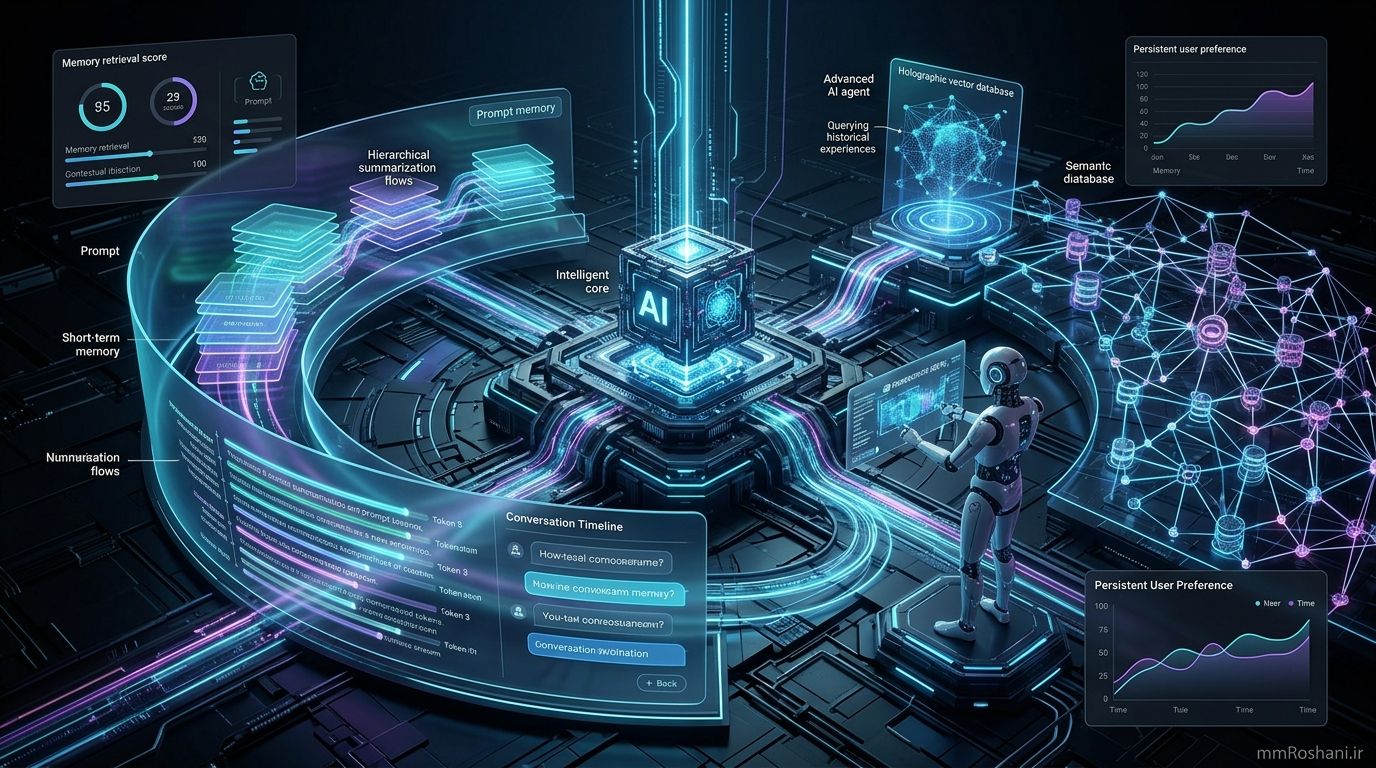

3.2. Memory Management (Statefulness)

True autonomy requires memory (Chapter 8). An agent must remember what it did 5 minutes ago (Short-term memory) and what the user prefers based on interactions from 5 months ago (Long-term memory).

- Short-term memory is typically managed via the context window. As the context fills up, engineers must implement rolling windows or hierarchical summarization to keep the prompt size manageable.

- Long-term memory relies on Vector Databases and Graph Databases. When an agent boots up, it queries the vector database for relevant past experiences, injecting them into the system prompt before taking action.
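The two memory tiers can be sketched in a few lines. Short-term memory here is a rolling window built on `collections.deque`; long-term retrieval uses keyword overlap as a toy stand-in for a vector database query. The `AgentMemory` class and its method names are hypothetical.

```python
from collections import deque
from typing import Deque, List

class AgentMemory:
    def __init__(self, window: int = 4) -> None:
        self.short_term: Deque[str] = deque(maxlen=window)  # rolling window
        self.long_term: List[str] = []  # stand-in for a vector database

    def remember(self, message: str) -> None:
        self.short_term.append(message)  # the oldest turn drops out when full

    def store_fact(self, fact: str) -> None:
        self.long_term.append(fact)

    def recall(self, query: str, k: int = 2) -> List[str]:
        """Toy retrieval by word overlap; a real system would use embeddings."""
        terms = set(query.lower().split())
        scored = [(len(terms & set(f.lower().split())), f) for f in self.long_term]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [fact for _, fact in ranked[:k]]

    def build_prompt(self, query: str) -> str:
        lines = ["Relevant memories:"] + self.recall(query)
        lines += ["Recent turns:"] + list(self.short_term)
        lines.append(f"User: {query}")
        return "\n".join(lines)

memory = AgentMemory(window=2)
memory.store_fact("user prefers dark mode")
memory.remember("User: hi")
memory.remember("Agent: hello")
print(memory.build_prompt("which mode does the user prefer"))
```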

3.3. Model Context Protocol (MCP)

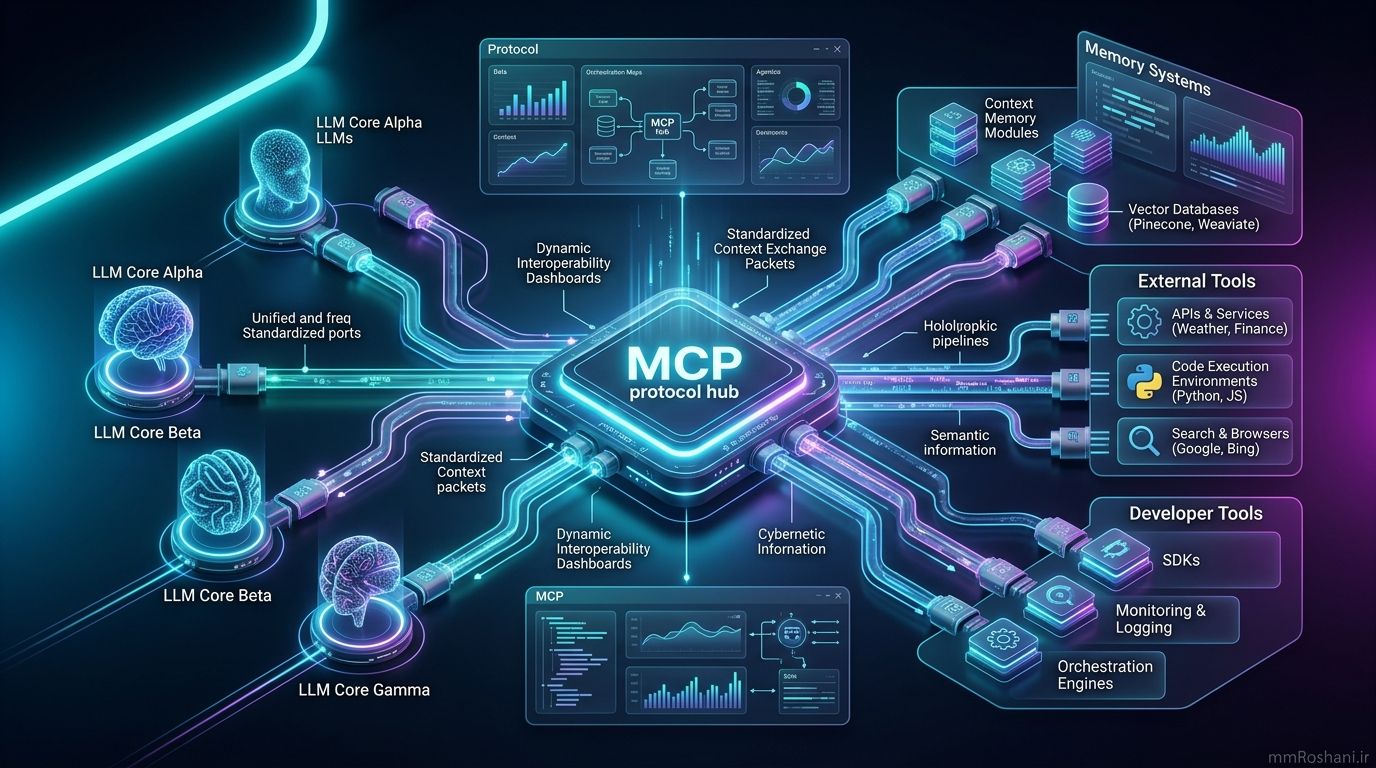

One of the most exciting developments covered in the book is the Model Context Protocol (MCP). As we build more tools and agents, integrating them becomes a networking nightmare (Chapter 10). MCP aims to standardize how context, memory, and tools are exposed to models. By adopting standardized protocols, AI engineers can plug-and-play different memory modules and toolsets without rewriting the connective middleware every time a new LLM is released.

4. Best Practices for Implementing Agentic Patterns

To wrap up, integrating these design patterns requires a shift in how we handle DevOps and monitoring.

- Observability is Non-Negotiable: When an agent goes off the rails, you need to know exactly which step in the chain failed. Tools like LangSmith, Langfuse, or Weights & Biases are crucial. You must log the input, output, latency, and token usage for every single node in your agentic graph.

- Goal Setting and Monitoring (Chapter 11): Agents can get stuck in infinite loops (e.g., trying to compile code, failing, attempting the same fix, failing again). Always implement a strict hyperparameter for max_iterations and a budget for max_tokens.

- Human-in-the-Loop (HITL): For high-stakes actions (like sending an email to a client), design your agent to pause and request human authorization. The agent prepares the payload, but a human pushes the button.
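The three practices above can be combined in a small guard around the agent loop. This is a sketch with hypothetical names: `step` stands in for one agent iteration and reports whether it finished and how many tokens it used, while `approve` stands in for whatever UI collects the human decision.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

def run_guarded(
    step: Callable[[int], Tuple[bool, int]],
    max_iterations: int = 5,
    max_tokens: int = 1000,
) -> Tuple[str, List[Dict[str, Any]]]:
    """Run step(i) until it reports done or a limit is hit; return status and trace."""
    trace: List[Dict[str, Any]] = []
    tokens = 0
    for i in range(max_iterations):
        start = time.perf_counter()
        done, used = step(i)
        tokens += used
        # Per-step record: what you would ship to your observability tool.
        trace.append({"iteration": i, "tokens": used,
                      "latency_s": time.perf_counter() - start})
        if done:
            return "done", trace
        if tokens >= max_tokens:
            return "token_budget_exceeded", trace
    return "max_iterations_reached", trace

def send_with_approval(
    payload: Dict[str, str],
    approve: Callable[[Dict[str, str]], bool],
    send: Callable[[Dict[str, str]], None],
) -> str:
    """Human-in-the-loop: the agent prepares the payload, a human decides."""
    if not approve(payload):
        return "rejected"
    send(payload)
    return "sent"
```

Returning a status string instead of raising lets the caller decide whether hitting a limit should trigger a fallback, an alert, or a handoff to a human.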

5. Conclusion

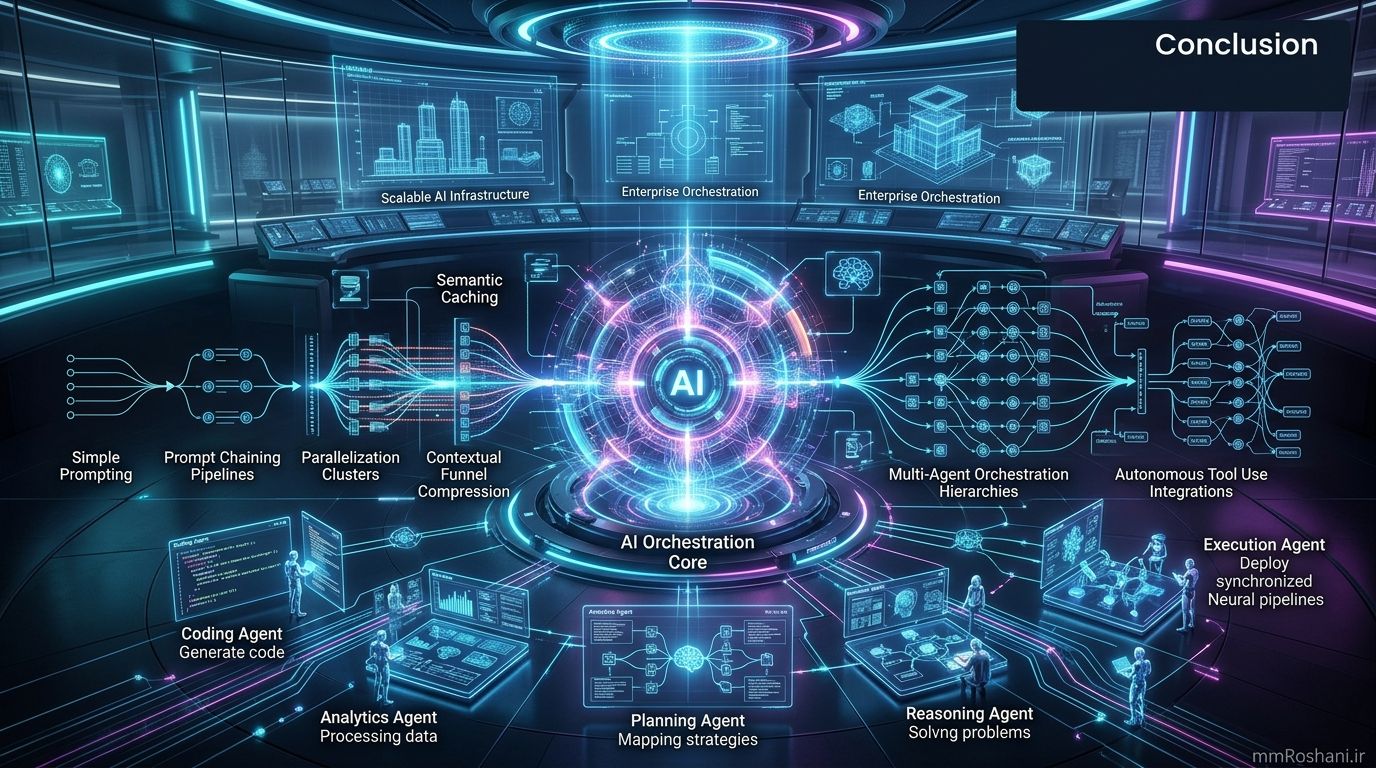

We are moving past the era of raw prompting and entering the era of AI Engineering architecture. By embracing the principles outlined in Agentic Design Patterns—Prompt Chaining, Parallelization, Tool Use, and Multi-Agent Orchestration—and combining them with field-tested optimizations like Contextual Funnels and Semantic Caching, we can build robust, highly autonomous systems.

The future of software is agentic. It is no longer about whether LLMs can write code or fetch data; it is about how we architect the systems that manage them.

I highly recommend picking up a copy of Agentic Design Patterns to dive deeper into the 424 pages of architectural blueprints.

What agentic design patterns are you currently experimenting with in your projects?

Let me know in the comments below, or subscribe to the newsletter for more deep dives into production-grade AI engineering!